Are you facing the issue in your organization

about soft deleting the high volume of records in the Informatica MDM? Are you looking

for the best possible way to soft delete this bulky volume of records? Are you looking for

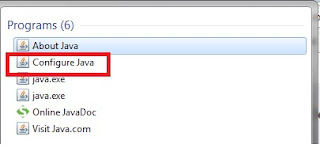

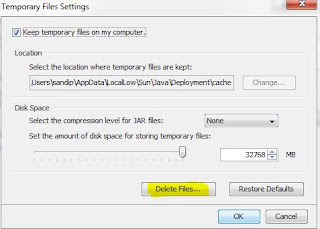

information about how to use the Services Integration Framework (SIF) – Delete API? This article examines the basic concept of SIF – Delete API and how to

implement Java code for soft deleting records using SIF API.

Business Use Case:

Assume that the MDM implementation is complete and the daily jobs are running well in production. One day, however, the ETL team loads the wrong set of data into the MDM landing tables, and the records move from landing to staging and from staging to the Base Object. Bad data is now present in the Base Object, XREF and history tables, and the business would like to soft delete these records. The following options are available to resolve the problem:

a) Physically delete the records using the ExecuteBatchDelete API.

b) Have the ETL team flag the records as soft deleted and reload them into the landing tables, then process them with the stage and load jobs.

c) Soft delete the records using the SIF Delete API.

Of these, option c), soft deleting the records with the SIF Delete API, is the easiest to implement and meets the business need.

What is SIF Delete API?

The SIF Delete API can delete a base object record and all of its cross-reference (XREF) records. It can also delete a specific XREF record. When an XREF record is deleted, the state of the record in the Base Object table is reset, and the new state is chosen on a higher-precedence basis. Deleting records changes their state as follows:

- Records in the ACTIVE state are set to the DELETED state.

- Records in the PENDING state are hard deleted.

- Records in the DELETED state remain in the DELETED state.
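
The three rules above can be written down as a small lookup. The sketch below is only an illustration of those rules: `DeleteStateSketch`, `State` and `afterDelete` are hypothetical names invented here and are not part of the SIF SDK. An empty `Optional` stands for a record that no longer exists after the call.

```java
import java.util.Optional;

/** Illustrative model only: these types are hypothetical, not SIF classes. */
final class DeleteStateSketch {

    /** Record states named in the list above. */
    enum State { ACTIVE, PENDING, DELETED }

    /**
     * Returns the state a record is left in after a delete,
     * or Optional.empty() when the record is physically removed.
     */
    static Optional<State> afterDelete(State current) {
        switch (current) {
            case ACTIVE:
                return Optional.of(State.DELETED); // soft delete
            case PENDING:
                return Optional.empty();           // hard delete: the row is removed
            case DELETED:
                return Optional.of(State.DELETED); // already soft deleted, unchanged
            default:
                throw new IllegalArgumentException("Unknown state: " + current);
        }
    }

    public static void main(String[] args) {
        for (State s : State.values()) {
            String outcome = afterDelete(s).map(State::name).orElse("(hard deleted)");
            System.out.println(s + " -> " + outcome);
        }
    }
}
```

The point to notice is that only ACTIVE records are truly soft deleted. A PENDING record that is sent to the Delete API is removed outright, so it is worth checking record states before running a bulk delete.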

Sample API Request:

    // Build a delete request for one record, identified by source key and source system
    DeleteRequest request = new DeleteRequest();
    RecordKey recordKey = new RecordKey();
    recordKey.setSourceKey("4001");
    recordKey.setSystemName("SRC1");

    ArrayList recordKeys = new ArrayList();
    recordKeys.add(recordKey);
    request.setRecordKeys(recordKeys);
    request.setSiperianObjectUid("PACKAGE.CUSTOMER_PUT");

    // sipClient is an already initialized SIF client
    DeleteResponse response = (DeleteResponse) sipClient.process(request);


Response Processing:

getDeleteResults() returns a list of RecordResult objects. Each one carries the information needed to check the outcome, such as the record key with ROWID_XREF, PKEY_SRC_OBJECT, ROWID_SYSTEM and so on, and can be read as shown below.

    DeleteResponse response = (DeleteResponse) sipClient.process(request);

    // Iterate through the per-record results in the response
    for (Iterator iter = response.getDeleteResults().iterator(); iter.hasNext();) {
        RecordResult result = (RecordResult) iter.next();
        System.out.println("Record: " + result.getRecordKey());
        System.out.println(result.isSuccess() ? "Success" : "Error");
        System.out.println("Message: " + result.getMessage());
    }

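
For a bulk run, printing every result is less useful than keeping the failures for follow-up. The sketch below shows one possible way to do that. It runs without the SIF libraries, so `ResultView` is a hypothetical stand-in holding the three values the loop reads (record key, success flag, message), and the sample keys and messages are made up.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

/** Hypothetical stand-in types; in real code the values come from RecordResult. */
final class ResultSummarySketch {

    static final class ResultView {
        final String recordKey;
        final boolean success;
        final String message;

        ResultView(String recordKey, boolean success, String message) {
            this.recordKey = recordKey;
            this.success = success;
            this.message = message;
        }
    }

    /** Maps each failed record key to its message, preserving response order. */
    static Map<String, String> failures(List<ResultView> results) {
        Map<String, String> failed = new LinkedHashMap<>();
        for (ResultView r : results) {
            if (!r.success) {
                failed.put(r.recordKey, r.message);
            }
        }
        return failed;
    }

    public static void main(String[] args) {
        // Made-up sample data for illustration only
        List<ResultView> results = new ArrayList<>();
        results.add(new ResultView("4001", true, "ok"));
        results.add(new ResultView("4002", false, "sample failure text"));

        Map<String, String> failed = failures(results);
        System.out.println((results.size() - failed.size()) + " succeeded, "
                + failed.size() + " failed");
        failed.forEach((key, msg) -> System.out.println(key + ": " + msg));
    }
}
```

Keeping the message next to the key makes it easier to decide afterwards which records can simply be retried and which need investigation.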

Sample Code:

    // Soft deletes the XREF records whose ROWID_XREF values are passed in
    private void deleteRecord(List xrefIds) {
        try {
            List successRecord = new ArrayList();
            List failedRecord = new ArrayList();

            DeleteRequest request = new DeleteRequest();
            ArrayList recordKeys = new ArrayList();

            // Create one RecordKey per ROWID_XREF
            Iterator itrRowidXref = xrefIds.iterator();
            while (itrRowidXref.hasNext()) {
                Integer rowidXrefValue = (Integer) itrRowidXref.next();
                RecordKey recordKey = new RecordKey();
                recordKey.setRowidXref(rowidXrefValue.toString());
                recordKeys.add(recordKey);
            }
            request.setRecordKeys(recordKeys);
            request.setSiperianObjectUid("BASE_OBJECT.C_BO_CUST");

            // false: process the request synchronously
            AsynchronousOptions localAsynchronousOptions = new AsynchronousOptions(false);
            request.setAsynchronousOptions(localAsynchronousOptions);

            DeleteResponse response = (DeleteResponse) sipClient.process(request);

            // Sort each result into the success or failure list
            if (response != null) {
                for (Iterator iter = response.getDeleteResults().iterator(); iter.hasNext();) {
                    RecordResult result = (RecordResult) iter.next();
                    if (result.isSuccess()) {
                        successRecord.add(result.getRecordKey().getRowidXref());
                    } else {
                        failedRecord.add(result.getRecordKey().getRowidXref());
                    }
                }
            }
            System.out.println("Failed Records : " + failedRecord);
        } catch (Exception e) {
            e.printStackTrace();
        }
    }
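
With a high volume of records, it may be safer to send the ROWID_XREF values in several smaller requests than in one very large one. The sketch below shows one way to split the list before calling the method above. `BatchSketch` and `partition` are hypothetical names introduced here, and the batch size of 1000 is an arbitrary illustrative value, not a documented SIF limit.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical helper, not part of the SIF SDK. */
final class BatchSketch {

    /** Splits ids into consecutive sublists of at most batchSize elements. */
    static <T> List<List<T>> partition(List<T> ids, int batchSize) {
        if (batchSize <= 0) {
            throw new IllegalArgumentException("batchSize must be positive");
        }
        List<List<T>> batches = new ArrayList<>();
        for (int start = 0; start < ids.size(); start += batchSize) {
            int end = Math.min(start + batchSize, ids.size());
            batches.add(new ArrayList<>(ids.subList(start, end)));
        }
        return batches;
    }

    public static void main(String[] args) {
        List<Integer> xrefIds = new ArrayList<>();
        for (int i = 1; i <= 2500; i++) {
            xrefIds.add(i);
        }
        // 1000 is an assumed batch size chosen only for this example
        for (List<Integer> batch : partition(xrefIds, 1000)) {
            // In the article's code, deleteRecord(batch) would be called here
            System.out.println("Would delete " + batch.size() + " records");
        }
    }
}
```

Smaller batches also limit how much work has to be repeated when a single request fails. The right size depends on the environment and is best found by testing.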

Details about implementation are explained in this video: